Pascale Nini: A Visionary Trailblazer

Discover ImmerVision through Pascale Nini’s profile

Tell us a bit about ImmerVision, the company of which you are President and CEO.

Founded in France in 2000, ImmerVision is a global leader in 360° panoramic imaging technology. Based in Montreal since 2003, the company now licenses its patented panomorph optical technology and image processing algorithms to lens producers, product manufacturers, and software developers around the world.

After collaborating with some 35 security camera brands and helping develop over 100 video surveillance software programs worldwide, ImmerVision entered the transportation market in 2017. We’re currently working with several industry players such as LeddarTech, a global leader in LiDAR technologies, to increase the perception and accuracy of vehicle vision systems. It’s really interesting work! And we’ll be making a major global announcement soon, so stay tuned!

ImmerVision was founded in France. Why did you choose Quebec for its development?

At the time, in the early 2000s, France was too far from our market, which was mainly in North America and Asia. Investissement Québec won us over with its R&D tax credits, and all the great work they do to attract companies and help them settle in was really valuable to us during our transition.

What is panoramic viewing and can you give an example of what it can do for the average person in their daily life?

It’s a subject of some debate, but many connected devices, which are increasingly common in households, have miniature cameras that capture and analyze our movements. CCTV cameras for houses and public buildings also use panoramic viewing.

Cameras on cars also use wide-angle viewing. Several cellphone brands, such as Motorola, have also adopted 360° capture, not to mention GoPro cameras, which are becoming increasingly popular worldwide.

What is the optics/photonics industry and why is it important in the development of smart mobility?

It’s intelligent machine vision that takes the data collected by wide-angle cameras and presents it in a way artificial intelligence algorithms can understand. Smart mobility depends 100% on understanding the environment. It’s not just viewing—above all else, it’s understanding what the cameras are seeing. You need all three components of autonomy: perception, resulting from high-level optical quality; data analysis, including the aggregation of information to determine action; and finally, action.

What are the challenges that still need to be addressed in the coming years?

We’re working on better capture, but the challenge is still how to get the information to the artificial intelligence algorithms in real time to gain a better understanding of what the cameras are seeing. We’re working on simplifying these processes so that there’s no delay. That’s the key to self-driving transportation. We call it “data in picture.”

How does it feel to be a supplier for major global transport companies?

We’re a lot smaller than the companies we work with! But they all really need smaller companies like us to advance their technologies. We spark innovation for these large companies. We help them think about technologies they might not otherwise have thought of. We’re very much in the early stages of this type of work.

The ecosystems of these large corporate machines are complex. Being smaller means we can be very responsive. And you shouldn’t be afraid to invest! That’s the key to our credibility.

As a woman, what’s it like for you in the male-dominated world of transportation?

I don’t deny that there are a lot of problems. But I personally have never encountered any obstacles as a woman when marketing our technologies. I never felt that my gender was a disadvantage, so I never thought of it that way.

The world of finance, on the other hand, is a whole other story. You have to work very hard to be taken seriously as a woman. I’ve often experienced discrimination, for example, when looking for funding.

What do you think will be the greatest breakthrough in wide-angle imaging in the coming years?

It will be to bridge the gap with artificial intelligence. Today’s viewing is based on narrow-angle coverage. We need to ensure that viewing becomes perception, meaning it provides context for images and algorithms.

The idea is to reproduce the human brain—the more intelligible the sensor data is for artificial intelligence algorithms, the more intelligent the mobility will be.

Continue reading on this subject

Will industrial strategies chart a new course for the future of Quebec industries?

The Quebec economy—and more broadly the world economy—faces multiple challenges: the climate crisis, supply chain problems, labour shortages, inflation, etc. Quebec has an abundant supply of resources, know-how, and expertise; however, the next points on its agenda should include structuring, developing, and planning industrial activities.


Telematic Data: the Key to a Successful Energy Transition

Fleet managers currently face the colossal challenge of the energy transition as they work to meet legislative requirements and societal demands. For most carriers, in both the passenger transport and last-mile delivery sectors, this green shift has already begun.


Ambition EST 2030: a roadmap for propelling Quebec to the forefront of the electric and smart transportation industry by 2030

Propulsion Québec, the cluster for electric and smart transportation, is announcing Ambition EST 2030, a roadmap for the electric and smart transportation (EST) industry developed in partnership with Deloitte.


Energy transition, the challenge that is shaking up the trucking industry

The U.S. Department of Energy recently released a report containing a vehicle cost analysis for zero-emission medium- and heavy-duty trucks.


The Mobility of the Future is Smart, Green, Inclusive and Healthy

Innovation is part of our Alstom in Motion 2025 strategy. It has brought us to where we are today, and we want to go even further. With its new scale and combined expertise, Alstom has doubled its innovation capacity, and we will support this growth by doubling our financial investment in R&D, up to 600 million euros (875 million Canadian dollars) per year by 2024.


Astus – Proud to support IMPULSION MTL 2021

With more than 25 years of expertise in the field of vehicle telematics, Astus is now a key player in the energy and digital transition of mobility and transport.


LiDAR and AI in autonomous vehicle commercialization and functional safety

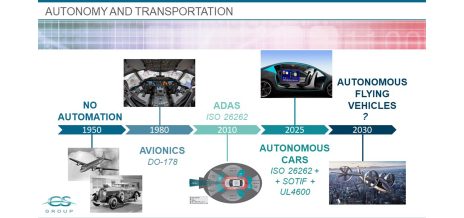

The story of smart, autonomous transportation began nearly half a century ago. In the early 1980s, avionics systems started to replace more traditional mechanical and hydraulic systems with onboard computers and embedded software.


Is there a regulatory framework for location data?

Analyzing location data can help service providers assess whether to boost services in one part of a city versus another, at a particular time of day, or in preparation for an upcoming festival, for instance. However, the data used for these analyses is linked to individuals and makes it possible to identify them.

Read more

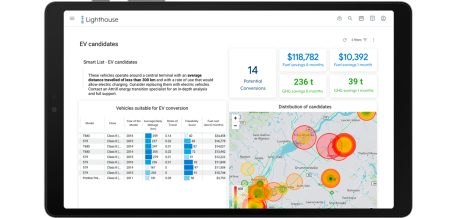

How to develop a successful strategy for your transition towards EVs

Even today, it is not uncommon for companies to manage their vehicle fleets based on assumptions or on incomplete and sometimes inaccurate information reports.


Five key cybersecurity takeaways for the automotive industry

In our ever-changing connected world, digital transformation is affecting every aspect of our lives. The automotive industry is no exception: our cars are becoming our best co-pilots, allowing us to plan our trips with real-time data or to read and respond to text messages using voice commands.
